I use Windows Live Photo Gallery (WLPG) because it has powerful features for tagging my photos and sorting through them. I depend on its functionality. But I have long been annoyed by its inconsistent performance, and today I investigated.

My sad conclusion is that it appears that WLPG does a lot of extraneous I/O when zooming images, then busy waits for the results. The net result is that if you try using WLPG on a laptop without an SSD you will get poor performance and poor battery life. It is possible to work around this, and double the performance of WLPG, but it’s a tricky operation.

If Microsoft wants Windows to be seen as fluid and fun, like other OSes then it needs to take stuff like this seriously and make sure that avoidable performance problems don’t ruin an otherwise excellent product.

The setup

I frequently notice small hangs (from one to five seconds) when tagging photos or browsing through photos with WLPG. I have a four-core/eight-thread Sandybridge machine with 8 GB of RAM and there is, I believe, no reason for significant delays as I skim through my photos. I eventually found a simple scenario that let me reliably produce a short hang, and this is what I analyzed. It is not the worst hang I have seen – those when tagging photos or rapidly advancing through photos are much longer – but it is the simplest, and I suspect it is representative of the architectural problems that make WLPG not as responsive as it should be.

Zooming in

Zooming in for a closer look on an image is a pretty basic operation. The image is already decoded so it should be fast. And indeed, once I’ve started zooming in on an image the frame rate is excellent – it keeps up with my mouse wheel. But on the initial roll of the mouse wheel on a new image there is a delay of about a second before the first image is displayed. I turned on my ETW input user-provider (yes, it’s a key logger) so that I could see keyboard and mouse events and then recorded a trace.

Rich ETW traces with input data, timer frequency, battery information, optional power draw, and more are available by using UIforETW to record traces.

In the xperfview screenshot below you can see the three diamonds in the Generic Events graph which represent movement of the mouse wheel, and on the CPU usage graph you can see the burst of CPU activity that was triggered.

WPA presents this information differently but the basic concepts are the same.

The zoomed image was not displayed until the end of the CPU activity, so by looking at the time along the bottom we can see that this is about a 1.05 second hang (Xperf Wait Analysis discusses other ways of finding hangs in traces).

CPU consumption, right?

The graph clearly shows that there was significant CPU usage (12.5% represents one pegged core on my eight-thread system), and the summary table shows about 1.2 s of CPU time consumed during the 1.05 second hang (across multiple threads).

So. Slam dunk. The CPU was busy doing hugely important CPU stuff in order to prepare for scaling the image.

Let’s check. Let’s look at the CPU sampling data to see what we find:

- ~652 ms in VCOMP90.DLL!_vcomp::PartialBarrier1_Poll::Block

- ~167 ms in ntkrnlmp.exe!IoRemoveIoCompletion

- ~68 ms in ntkrnlmp.exe!KiPageFault

- The remainder is miscellaneous functions that don’t add up to much

Wait a minute! What’s going on here?

Please don’t busy wait

PartialBarrier1_Poll::Block sounds like busy waiting. Busy waiting is bad form. It wastes energy, it harms battery life, and in some cases it actually harms performance. Don’t busy wait. Spinning for a very short period of time might make sense, but it looks like _vcomp::PartialBarrier1_Poll::Block, which appears to be part of Microsoft’s OpenMP implementation, is busy waiting for more than half a second over a one second period. It’s name and the sampling data make it clear that it is doing nothing valuable. That makes me sad. In addition to being a waste of electricity, busy waiting means that wait analysis doesn’t work which means it is impossible to find out what the thread is actually waiting on. Double fail.

Since we can’t scientifically determine what the code is waiting on we’ll have to guess. The only thing I can be certain it isn’t waiting on is some other CPU bound thread, since every other thread on the system is at least 98% idle during this busy wait.

It’s the I/O

The ntkrnlmp.exe!IoRemoveIoCompletion suggests that I/O might be what is waited on. ~167 ms of CPU time for I/O is impressively large and suggests that a lot of it is being done.

Page faults are I/O too

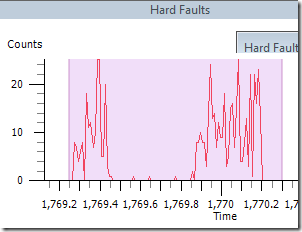

The page faults are easily investigated by looking at the Hard Faults summary table. These are not page faults from memory pressure (I made sure I had more than 2 GB available when running these tests). The hard faults summary table shows that they are all from the file “C:\Users\Bruced\AppData\Local\Temp\~PI6E15.tmp”. So clearly WLPG is memory mapping this file and faulting it in. But where did it come from?

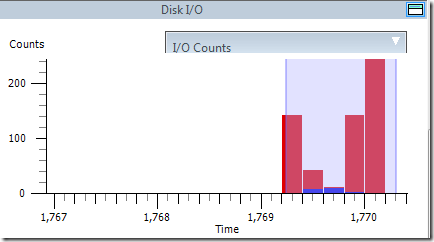

Looking at the disk I/O graph we can see that there was no disk I/O until the selected region, which is when I did the zoom. Clearly the zooming is triggering disk I/O, and this was easily proven through repeated testing. Here we can see the disk I/O triggered by the mouse wheel activity, with nothing happening before the selected region.

Looking at the Disk I/O summary table (and confirming with the File I/O summary table) we see this:

WLPG writes 21.9 MB to this temporary file, then reads back 17.9 MB of it. And it takes about 745 ms to do it.

The images being viewed were all 5184 by 3456, so the data written to the file is too small to be the decompressed image. It is more than enough for a full 24-bit mipmap chain, which would make sense for zooming. The odd thing is that the region of the image being read is different from what is being written. It seems most likely that WLPG is maintaining a large cache on disk, so the writes are flushing out the previous cache and the reads are reading in the new data. Or something like that. Caching mipmaps seems particularly odd since mipmaps can be regenerated faster than they can be loaded from disk.

Unfortunately the loading (of the presumably cached mipmaps) is slowed down by the simultaneous writes. A hard disk can only do one thing at a time.

Browsing images

After realizing that reads and writes to the %temp% directory were the limiting factor in WLPG performance I did some profiling of photo browsing by advancing through images. During a 22.4 second period where 14 images were viewed I measured 12.7 seconds of disk I/O time. Of this disk I/O, fully 80% of the disk I/O time, and 89% of the bytes read and written was spent on traffic to those temporary files. Looking at the CPU usage it is clear that a lot of the delay in switching between images is due to WLPG waiting on this disk I/O, although in this case at least it avoided busy waiting. It seems likely that image browsing performance could be doubled if the traffic to the %temp% directory was eliminated.

The disk I/O time is particularly frustrating because when you are scanning through images in order, WLPG spends about half of its time writing to these temporary files, and never reading from them. Thus, this significant performance cost has no benefit to this common scenario.

Subverting the design for fun and profit

The WLPG designers obviously felt that caching lots of data on disk would improve performance. My tests suggest otherwise. Since I can’t disable the caching scheme entirely I decided to rework it by putting the %temp% and %tmp% directories on a 1.5 GB RAM disk. This is a delicate operation because if I consume too much RAM then Windows will start paging and I’ll replace one disk I/O problem with another, but with an 8 GB system there is room for this to work, as long as I am careful.

It worked, quite brilliantly. The disk I/O time went down dramatically (100 ms for 700 MB of writes, instead of 7,400 ms for 545 MB of writes), and the browsing performance roughly doubled. The remaining delays appear to be from the actual decoding of the images, which is as it should be.

That’s all folks

Due to a lack of symbols in some components (imaging.dll, I’m looking at you) and a lack of source code and architectural documentation I can’t tell exactly what’s going on, but here’s what the trace seems to be telling us.

- WLPG writes some data to a temporary file. The type of data is unknown but is assumed to be mipmaps, probably from the previously viewed image

- WLPG reads from a different portion of the temporary file, through a memory map – perhaps loading previously cached mipmaps?

- During most of this time WLPG is busy waiting for the I/O to be done

- For some reason the Windows disk cache, which usually caches all recently written data, doesn’t help

- Somewhere towards the end of the trace WLPG displays the zoomed image

- The behavior when browsing images is similar except that the cache is only ever written to

So that’s where it ends. Maybe the WLPG team can explain in more detail, or maybe they can fix the problem, by not reading and writing ~40 MB of data to disk during a latency sensitive operation.

Until then I guess I’ll be firing up my RAM disk whenever I have a lot of photo tagging and browsing work to do.

Update

The RAM disk works brilliantly. It exposes a few other performance limitations in WLPG (I don’t think they anticipated that somebody would add 33,000 people tags covering over 600 different people) but overall it makes WLPG feel much more fluid.

Also, I have heard from my unofficial sources at Microsoft that the busy wait in OpenMP is going away in Visual Studio 2012. That won’t actually improve performance (the excessive disk I/O is the real problem) but at least it will avoid the electricity consumption that comes with huge amounts of busy waiting.

And, in 2014 I finally bought an SSD which makes this problem go away. WLPG could have great performance without an SSD, but it currently doesn’t.

Pingback: In Praise of Idleness | Random ASCII

Pingback: Fixing another Photo Gallery performance bug | Random ASCII

Pingback: Windows Slowdown, Investigated and Identified | Random ASCII

Pingback: The Lost Xperf Documentation–Disk Usage | Random ASCII

Ahh, you’ve highlighted my single complaint with Windows Live Photo Gallery. I find it to be a fantastic app, but the constant pauses while I’m just trying to browse through photos annoys the living ‘something unpleasant’ out of me. I’ll have to look into making a ram disc for this problem, but I wish they’d fix it as part of the design of the app

Putting the temp directory on a RAM disk or a fast flash drive does solve some of the stalls. Pre-caching the database before launching WLPG solves others. But even putting both techniques together does not solve all of the stalls. It’s a pity because they are eminently fixable. There is no reason why WLPG can’t avoid 99% of these stalls.

I just noticed there is a new version out. I’ve installed it, but some quick testing shows that it fixes none of the performance problems. There are long hangs after startup, and massive unnecessary disk activity when zooming in on images. Bizarre. Here’s the download link anyway:

http://blogs.windows.com/windows/b/windowsexperience/archive/2012/08/07/introducing-the-new-windows-photo-gallery-and-movie-maker.aspx

Pingback: Basics of Digital Photo Organization | Random ASCII

Agree with this lagging as I browse through photos. Most annoying is, when in the editor, it will show one photo while the adjacent photo is actually active. So if you delete the photo you see, it might be deleting a different photo! Very frustrating. My work around is to scroll/zoom in and back out for every photo…annoying, but not as annoying as trying to get back photos I delete by accident.

Funny, though, is that my old Vista system came w/ this photo editor which is now not available on succeeding windows OS’s as the free download of windows live photo gallery. Somehow I’m guessing some added functionality in programming caused these lags. I’m not a programmer so a lot of your explanation is over my head, but I do notice the issues quite a bit and find it crazy that they had a good program at one time and can’t fix these issues or just go back to the old version until they can.

The technical details aren’t super important, but I can summarize the issues by saying that they are entirely avoidable. The delays when browsing through photos and when zooming in on photos could be dramatically reduced by making some relatively simple code changes to fix the performance bugs. Simply not using the disk would be enough to dramatically improve performance.

Doing a bit more pre-caching (decoding two images ahead instead of just one, on multiple cores) would further improve performance.

A modern PC, even a laptop with a slow drive, is more than capable of moving swiftly through large images. Heck, Windows Explorer manages to browse images very quickly, and that’s not even it’s full-time job!

Pingback: Xperf for Excess CPU Consumption | Random ASCII

Pingback: Xperf for Excess CPU Consumption: WPA edition | Random ASCII

Pingback: Bugs I Got Other Companies to Fix in 2013 | Random ASCII

Pingback: Self Inflicted Denial of Service in Visual Studio Search | Random ASCII

Pingback: ETW Central | Random ASCII

So the program is abandonware now, think you can reverse-engineer it and program it so it’s fixed within its code to what you said was wrong about it, and re-release it?

That sounds challenging. I’m tempted (because I’d hate to lose access to it) but it sounds like a lot of work, and it would be challenging to release it in a way that wasn’t a copyright violation