Xperf (Windows Performance Toolkit, also known as ETW) is a powerful tool for investigating performance issues, however it is a challenging tool to use. Some of this difficulty comes from intrinsic complexity – in order to fully investigate thread scheduling issues, for instance, you need to fully understand the Windows thread scheduler.

This post was updated September 2015 to include information about UIforETW, WPA, and new columns.

However much of the difficulty comes from ‘accidental’ or unnecessary complexity, and included in this accidental complexity is the total lack of documentation of summary table columns. The data represented by these columns is very specific and subtle, however the lack of documentation means that a user of xperf must first reverse engineer the column meanings, build up a mental model, and then start analyzing the performance question at hand. In some cases the aspiring performance expert must even figure out the units for the columns!

Other posts in this series include:

In this post I take on the challenge of documenting the columns for the CPU Usage (Sampled) summary table which displays CPU samples to let you determine where CPU time is spent.

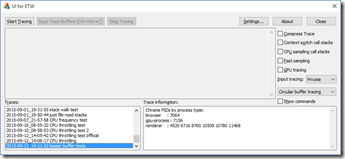

This post assumes that you are recording traces with UIforETW and that the WPA (Windows Performance Analyzer) startup profiles which ship with UIforETW have been installed. You can do this from the Settings dialog by clicking on Copy startup profiles. This post also assumes that you are using WPA 10 to view the traces – this is the default when you double-click on a trace in UIforETW‘s Traces list.

This post assumes that you are recording traces with UIforETW and that the WPA (Windows Performance Analyzer) startup profiles which ship with UIforETW have been installed. You can do this from the Settings dialog by clicking on Copy startup profiles. This post also assumes that you are using WPA 10 to view the traces – this is the default when you double-click on a trace in UIforETW‘s Traces list.

CPU Usage (Sampled)

Xperf has an excellent sampling based profiler built in. Running at a default rate of 1 KHz (but capable of running at up to 8 KHz – just check Fast sampling in UIforETW) the xperf sampler grabs call stacks from across the system, both kernel and user-mode stacks, with minimal overhead. It is far superior to the many SuspendThread/ResumeThread based systems in various open source projects.

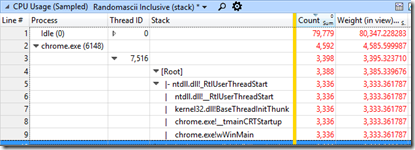

UIforETW’s default startup profile shows the CPU Usage (Sampled) data in table form. There is a graph of sampled data available but the CPU Usage (Precise) graph is more accurate than the CPU Usage (Sampled) graph, so I chose to show the precise graph (based on context switches) instead.

Default columns

The default set of columns in UIforETW‘s startup profile is labeled “Randomascii inclusive (stack)”. It’s columns are:

Process

Process- Thread ID

- Stack

- Orange bar (columns to the left are used for grouping)

- Count

- Weight (in view) (ms)

If you want per-process grouping then hide the Thread ID column. If you want grouping by process name instead of process ID then hide the Process column and show the Process Name column instead. As long as you sort by the Count or Weight (in view) columns the call stacks that consume the most CPU time will be near the top.

Another useful View Preset is labeled “Randomascii exclusive (module and function)”. It’s columns are:

Process

Process- Thread ID

- Module

- Function

- Orange bar (columns to the left are used for grouping)

- Count

- Weight (in view) (ms)

The inclusive view is most useful for attributing samples by call stacks (who called the expensive function) whereas the exclusive view is most useful for seeing what functions consumed the most CPU time all by themselves. In addition to the column modifications suggested for the inclusive view, it can sometimes be worth adding the Address column to the exclusive view, to see where in a function individual samples hit. As long as you sort by Count or Weight the thread with the most samples will be at the top, with the module with the most samples within that that thread at the top, and so on.

Note that the concept of “what instruction was executing when the timer interrupt fired” is not clearly defined for super-scalar out-of-order processors that can have many dozens of instructions in flight. The best analysis of how to interpret the Address column I have seen is found here.

There are other variations that can be useful – most summary table questions are answered by saying “arrange the columns like this…” – but they quickly get esoteric. Once you understand the Zen of summary tables you will know when to use them.

On to the column docs

It is assumed that you have already read the posts explaining how to record a trace and and that you have a trace to investigate.

All of the columns in the sampling summary table show data that is related to a particular sample or samples, and the grouping (the columns to the left of the orange bar) affects how many samples and how many rows are displayed. With the default “Randomascii inclusive” view there will be one row for each process, and as you drill down more rows will be revealed. The grouping by stack is particularly important since it lets you see the big picture more easily.

Any threads or processes that were not sampled during the visible time period will not show up, even if they did briefly run.

Here are the important columns:

- Process Name – this is the name of the process associated with a sample (i.e.; devenv.exe) without the process ID. This column is useful when you want to group all processes from one executable together

- Process – this is the name of the process plus its process ID. This column is useful when you want each process to be grouped separately

- Stack – this is a dynamically explorable view of the call stacks for all the samples in the current group. This column is only useful when it is to the left of the orange column. This column displays inclusive time – when a function shows up in the stack data it means that a sample was collected in that function or one of its descendants, along that particular path – see below for more details

- Module – this is the module in which a particular sample hit. This column displays exclusive time and should rarely be used at the same time as the Stack column

- Function – this is the function in which a particular sample hit. This column displays exclusive time and is often used with the Module column

- Address – this column specifies the raw instruction pointer address of a sample. This column displays exclusive time and is often used with the Module and/or Function columns

- Thread ID – this is the ID of the thread associated with a sample. If you enable this column and position it between Process and Stack then you will be grouping by thread (within each process) which lets you analyze threads separately

- Weight (in view) – this is an estimate of how much CPU time was spent in the current grouped row. It is a statistical estimate because a sampling profiler, by its nature, has no idea what is happening between samples. If you have enough samples and if your code is not synchronized to a timer then it will be reasonably accurate. The units are milliseconds

- %Weight – this is an estimate of what percentage of the total available CPU time was spent in the current grouped row. If you have an eight core (or four-core eight-thread) CPU then 100% represents all threads running all the time, and 12.5% represents one thread running all the time. On multi-core machines this column really isn’t very useful

- Count – this is a count of how many samples were recorded. At the default sampling rate of 1 KHz this number will be very similar to Weight (perturbed by minor variations in sample times) but if the sampling rate is changed then this will no longer map to milliseconds, so you should prefer the Weight column for estimating CPU usage. The main value of this column is to let you know when you have a statistically significant number of samples

- TimeStamp – this is the nanosecond precision time at which a sample was recorded. The units are seconds. Occasionally it is valuable to sort samples by time (you’ll usually have to group by samples) – but not often

- Annotation – this, column, which is available on most or all WPA tables, can be filled in during trace analysis. See this post for more details.

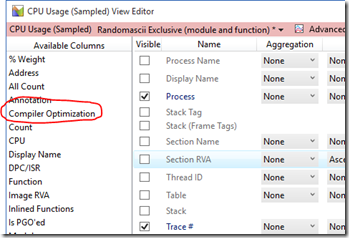

The most important columns are typically available by right-clicking on any column header and checking the column of interest. Some of the more esoteric columns (Image RVA, Is PGO’ed, Compiler Optimization) are only available by going to the View Editor in WPA and dragging from the Available Columns along the left to the column on the right.

The most important columns are typically available by right-clicking on any column header and checking the column of interest. Some of the more esoteric columns (Image RVA, Is PGO’ed, Compiler Optimization) are only available by going to the View Editor in WPA and dragging from the Available Columns along the left to the column on the right.

Here are the generally unimportant columns, documented for completeness:

- Display Name – this is normally the process name but for services it appears to be the lpDisplayName parameter to CreateService

- Thread Start Module – the module where the function passed to CreateThread is located

- Thread Start Function – the name of the function passed to CreateThread

- DPC/ISR – this column specifies whether a particular sample occurred during Regular CPU Usage, DPC, or ISR time. If you suspect that excessive device-driver time is a problem then you can enable this column and drag it to the left to see the grouping, but normally this information is not relevant to those focused on regular CPU Usage

- CPU – this is the CPU that a thread was running on when a sample was recorded. You should rarely care, unless you are working on the OS thread scheduler. Note that WPA 10’s tooltip for this column is incorrect

- Priority – this is the priority of the thread running when a sample was recorded

- Table – this contains the hard-coded value “CPU Usage (Sampled)”

- Section Name – this is the Portable Executable section where the sample hit, typically “.text” for user-mode programs

- Section RVA – this is the Relative Virtual Address of the sample, relative to the beginning of its section

- Image RVA – this is the Relative Virtual Address of the sample, relative to beginning of the DLL or EXE

- Compiler Optimization – this shows what level of optimization the code was compiled to. Take it with a grain of salt, as hand-tuned assembly language showed up as optimized for Size while debug-mode non-optimized C++ showed up as Speed. Potentially you can use this to make sure your hot functions are optimized for speed

- Inlined Functions – this shows what functions were inlined in to another function, which could be important, but since WPA processes Chrome’s private symbols so slowly I always strip them and therefore miss this information. Is it useful? Let me know

- Trace # – this just appears to represent which trace (WPA can load multiple traces simultaneously) you are viewing

Unknown or unclear columns:

- Stack Tag and Stack (Frame Tags) are ways of adding additional meaning to stack frames. They are (lightly) documented here. WPA ships with some stack tags – see “C:\Program Files (x86)\Windows Kits\10\Windows Performance Toolkit\Catalog\default.stacktags” for an example

- Thread Name, Thread Activity Tag, and have unknown meaning and seem to be unused.

- The Source File name and Source Line Number columns look promising, but never seem to show any data

- Rank has unknown meaning but is used, showing values from –1 to 2 in my sample traces on Windows 10

- There are also PGO related columns, sometimes only available in the View Editor. You can find out more about them in this article at Kyle Sabo’s web site

See also this article which explains how to interrupt the sample locations on an out-of-order super-scalar processor.

Pingback: The Lost Xperf Documentation–CPU Scheduling | Random ASCII

Pingback: WPA–Xperf Trace Analysis Reimagined | Random ASCII

Pingback: The Lost Xperf Documentation–Disk Usage | Random ASCII

Pingback: Visual Studio Single Step Performance Fixes | Random ASCII

Pingback: Xperf for Excess CPU Consumption | Random ASCII

Pingback: Xperf for Excess CPU Consumption: WPA edition | Random ASCII

Hi Bruce,

great content.

I just have tried Sample Profiling like

wpr -disablepagingexecutive On

xperf -SetProfInt 10000000 cached

xperf -start ClrSession -on e13c0d23-ccbc-4e12-931b-d9cc2eee27e4:0x98:5 -f “%temp%\clr.etl” -buffersize 128 -minbuffers 256 -maxbuffers 1024 -on LOADER+PROC_THREAD+PROFILE -stackwalk Profile -f “%temp%\kernel.etl” -buffersize 128 -minbuffers 256 -maxbuffers 2048

to take a sample stack every second to be able to let it run for hours. My assumption was that this would enable me to get a call stack of all processes every 1s. But it appears that only very few processes are profiled by this.

I have an issue where a process does hang after 3h for 15 minutes. I have hoped to find out what was going on by using sampling at a very low rate to get the call stacks of all threads in all processes to see where it was hanging.

Is this a possible scenario with xperf?

An alternate route would be to reconfigure profiling for a short time window to take all CSwitch events and to turn it off after e.g. 1s and to turn it on once every minute. That would also greatly reduce the size of the profiled data.

By the way what exactly does COMPACT_CSWITCH drop when combined with CSWITCH which seems to reduce the amount of logged information?

I don’t know the difference between CSWITCH and COMPACT_SWITCH.

Sampling, at any rate, is statistical, and sampling every second makes it particularly likely that you will miss everything interesting.

If a particular process is completely hanging then you should attach a debugger to find out why — that will give you more detail than slow-sampling, including every call stack of every thread in the process.

If it’s a busy hang then record a trace — with the normal sampling rate — when it hangs.

If it’s an idle hang and you don’t want to use the debugger then try recording a context-switch/ready-thread trace (part of my recommended settings) to get the call stacks of where all of the threads are waiting.

From your explanation I see no advantage of recording data for multiple hours.

Yes I know that a debugger would be the best option but unfortunately this does happen on a build machine in India. To get the data I have hoped that the easiest way would be to collect a trace of the full build with a very slow sampling rate.

I will need to take a different route then…

Pingback: Defective Heat Sinks Causing Garbage Gaming | Random ASCII

Pingback: New Version of Xperf–Upgrade Now | Random ASCII

Pingback: Exporting Arbitrary Data from xperf ETL files | Random ASCII

Pingback: Bugs I Got Other Companies to Fix in 2013 | Random ASCII

Pingback: The Lost Xperf Documentation–CPU Usage (Precise) | Random ASCII

Pingback: ETW Central | Random ASCII

Hi,

Thanks so much for all the great info on xperf. I cannot get stackwalk to work on Windows 2012 no matter what I do; it works great on Windows 7. How can I get this to work???

2012, or 2012 R2? 2012 is equivalent to Vista, and has ‘problems’. Many people can’t get stack walking to work until Windows 7/2012 R2.

2012 is the one I need right now. I am going to try the win10 version of xperf to see if that helps.

hello,

I am using WPR to record activities, in my PC i am getting CPU usage sampled in analyser, however in server i am not getting that. Am i doing something wrong? CPU usage sampled is helpful in seeing stacks, where as in server i am capturing and seeing CPU usage precise which does not give me the stack view. Can you please help?

I can’t tell. You could try using UIforETW to record the traces, and to view them. Without that I don’t know what recording settings your using, or what viewing settings you are using.

That is, it might be that you have somehow done something wrong such that wpr isn’t recording sampled data, or maybe there is something on the server that is preventing sampled data form being recorded (rare but possible), or perhaps sampled data is being recorded but you aren’t configuring WPA to display it.

This was causing me lots of grief. I had to install the Intel VTune 2020 profiler and the hardware sampling driver, then it worked.

One view that works beautifully in PerfView, but I cannot find a way to replicate in WPA is Weight/Count by Function *inclusive*. This is very useful for finding functions that are called from multiple different code paths that might use some optimizations. Is there a trick to get such view in WPA too?

If you see a function that you suspect is hot (perhaps after looking at the process/module/function view) then you can right-click it in the stack and select “View Callers/Callees” to give you an inclusive view of all stacks that go through that function. Definitely handy. I use that a lot on heap investigations – view all callers of HeapAlloc or whatever in order to explore outwards from there.

That’s right — but sadly it works only if you already know which function to look at. Process/module/function view will only show exclusive counts inside the functions, so does not help locate the functions that are hot inclusively (e.g. because they repetitively call some 3rd party library API). What PerfView gives is effectively the summary of “View Callers/Callees” for every function in the trace, which allow to sort through all of them. It’s a bummer that WPA does not have something similar. Maybe one day…